Sustainable CNN for robotic: An offloading game in the 3D vision computation

Abstract

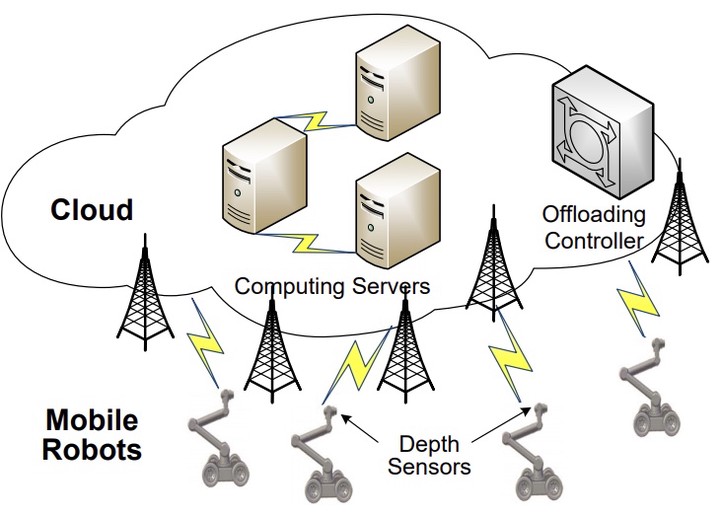

Three-dimensional (3D) scene understanding is of great significance to many robotic applications. With the rapid development of deep learning methods, especially convolutional neural networks (CNNs), 3D robotic vision has achieved satisfactory performance. However, sustainability is a severe problem in most scenarios, and few existing approaches pay enough attention to energy consumption. In this paper, we propose an energy-aware system for sustainable robotic 3D vision. Our contributions mainly include: 1) an effective CNN model for 3D scene understanding; and 2) an offloading strategy that makes the deep model more sustainable. First, we design a deep CNN model to analyze 3D point cloud data. The proposed model contains 92 layers to achieve state-of-the-art recognition accuracy, which, however, places a heavy burden on the computing hardware. Then, we formulate this deep learning computation problem as a non-cooperative game and adopt a heuristic algorithm that balances local computing and cloud offloading, in order to obtain an optimal solution that accounts for both efficiency and energy saving. Simulations demonstrate that our approach is robust and efficient, and that it outperforms state-of-the-art methods on several related tasks.
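The abstract does not specify the game's cost functions or the heuristic, so the following is only a minimal sketch of a generic binary offloading game under our own assumptions, not the paper's: each robot either runs its CNN inference locally or uploads its point cloud to a cloud server, robots that offload share a fixed uplink rate equally, and each robot minimizes a weighted sum of delay and energy. All names and constants (`Robot`, `BANDWIDTH`, `F_CLOUD`, `KAPPA`, `W_TIME`, `best_response`, `is_nash`) are hypothetical. Players update by best-response dynamics, a standard technique that is not necessarily the authors' heuristic.

```python
from dataclasses import dataclass

# Hypothetical system constants (illustrative only, not taken from the paper).
BANDWIDTH = 20e6   # total uplink rate shared by all offloading robots (bit/s)
F_CLOUD = 50e9     # cloud CPU frequency (cycles/s)
KAPPA = 1e-27      # effective switched capacitance used for local CPU energy
W_TIME = 0.5       # weight on delay; (1 - W_TIME) weights energy


@dataclass
class Robot:
    cycles: float     # CPU cycles needed for one CNN inference
    data_bits: float  # point-cloud size to upload if offloading (bits)
    f_local: float    # local CPU frequency (cycles/s)
    p_tx: float       # transmit power while uploading (W)


def local_cost(r: Robot) -> float:
    """Weighted delay + energy when the robot computes on board."""
    delay = r.cycles / r.f_local
    energy = KAPPA * r.f_local ** 2 * r.cycles
    return W_TIME * delay + (1 - W_TIME) * energy


def offload_cost(r: Robot, n_offloaders: int) -> float:
    """Weighted delay + energy when n_offloaders robots (including r) share the uplink."""
    rate = BANDWIDTH / n_offloaders          # equal share of the uplink
    t_up = r.data_bits / rate                # upload time
    delay = t_up + r.cycles / F_CLOUD        # upload + cloud compute
    energy = r.p_tx * t_up                   # robot only spends energy transmitting
    return W_TIME * delay + (1 - W_TIME) * energy


def best_response(robots: list[Robot], max_rounds: int = 100) -> list[bool]:
    """Round-robin best-response dynamics; True means the robot offloads."""
    offload = [False] * len(robots)
    for _ in range(max_rounds):
        changed = False
        for i, r in enumerate(robots):
            others = sum(offload) - offload[i]
            c_cloud = offload_cost(r, others + 1)
            c_local = local_cost(r)
            # Switch only on a strict improvement, so the iteration cannot cycle on ties.
            if offload[i]:
                choice = c_cloud <= c_local
            else:
                choice = c_cloud < c_local
            if choice != offload[i]:
                offload[i] = choice
                changed = True
        if not changed:
            return offload
    raise RuntimeError("best-response dynamics did not converge")


def is_nash(robots: list[Robot], offload: list[bool]) -> bool:
    """Check that no robot can strictly lower its cost by deviating alone."""
    n_off = sum(offload)
    for r, off in zip(robots, offload):
        if off and local_cost(r) < offload_cost(r, n_off):
            return False
        if not off and offload_cost(r, n_off + 1) < local_cost(r):
            return False
    return True


# Usage: 20 identical robots, each with a 5-Gcycle inference and a 1 MB point cloud.
robots = [Robot(cycles=5e9, data_bits=8e6, f_local=1e9, p_tx=0.5) for _ in range(20)]
result = best_response(robots)
print(sum(result))  # 16 robots offload; the other 4 run locally
```

In this simplified model a robot's offloading cost grows linearly with the number of offloaders n, so each robot i has a threshold θ_i below which the cloud is strictly cheaper. The function Φ(S) = Σ_{i∈S} θ_i - |S|(|S|+1)/2 over the set S of offloaders strictly increases with every strict switch, which is why the loop stops at a pure Nash equilibrium. In the usage example, local execution costs 5.0, while offloading costs 4.85 with 16 robots sharing the uplink and 5.15 with 17, so 16 robots offload.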